“A new #TwitterFiles investigation reveals that teams of Twitter employees build blacklists, prevent disfavored tweets from trending, and actively limit the visibility of entire accounts or even trending topics—all in secret, without informing users,” journalist Bari Weiss has revealed, as announced earlier. Weiss is one of the two journalists who received classified documents of the old Twitter management from the new management, headed by billionaire Elon Musk, via a former FBI lawyer. On 7 December, the two reporters alleged that this attorney had a dubious track record.

“Twitter once had a mission ‘to give everyone the power to create and share ideas and information instantly, without barriers.’ Along the way, barriers nevertheless were erected,” Weiss wrote on the social media platform.

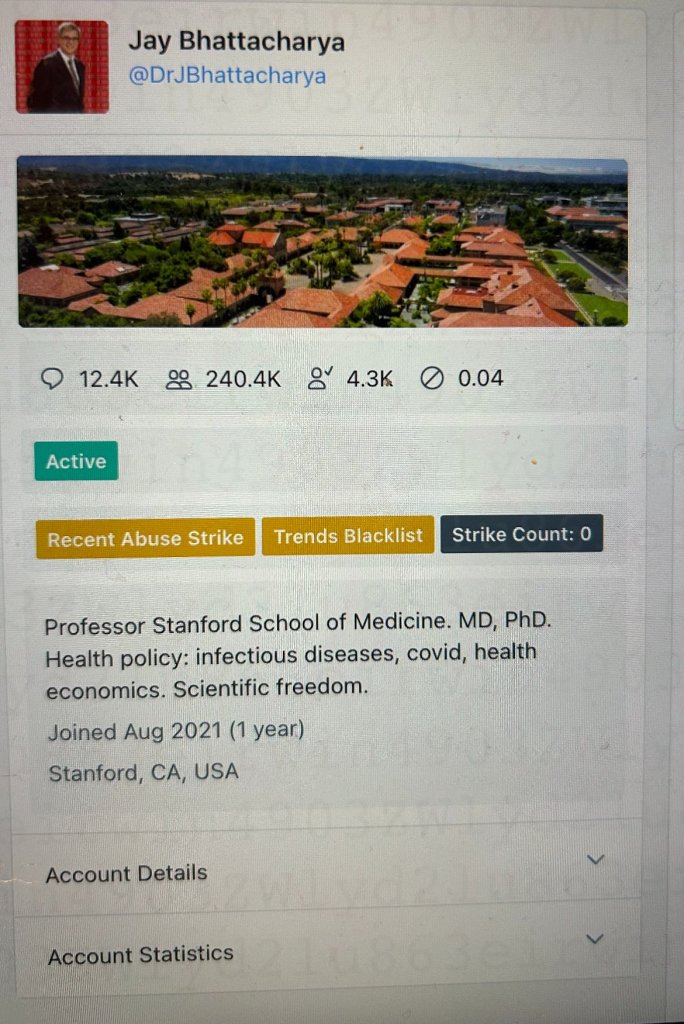

Weiss gave the example of Stanford University’s Dr Jay Bhattacharya (handle @DrJBhattacharya), who had argued that Covid lockdowns would harm children. “Twitter secretly placed him on a ‘Trends Blacklist’, which prevented his tweets from trending,” Weiss found. She shared the following screenshot:

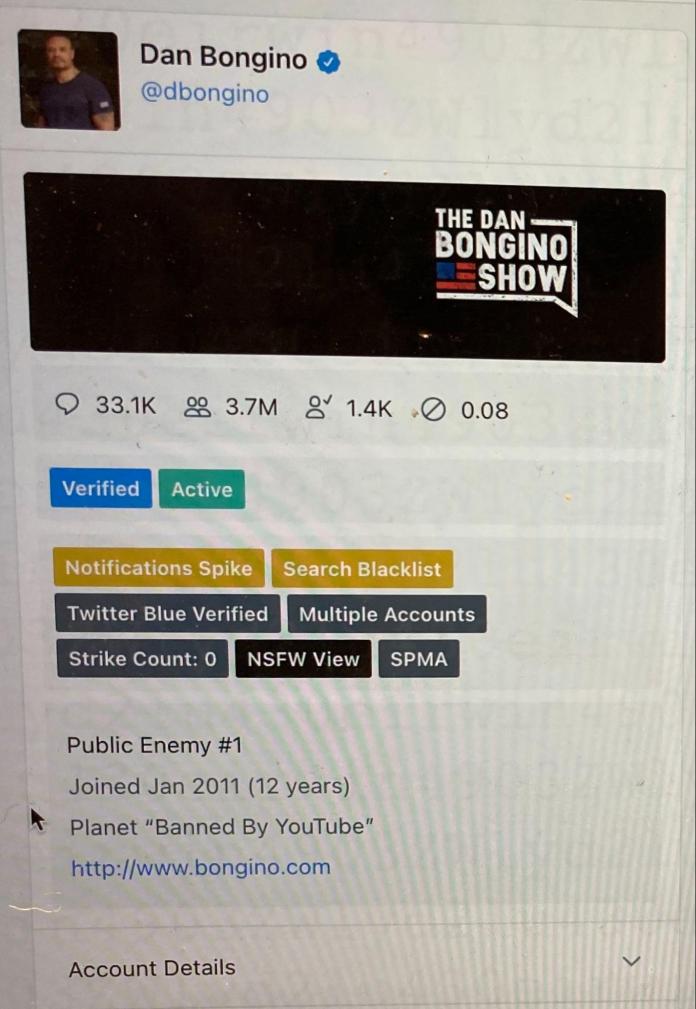

Likewise, popular right-wing talk show host Dan Bongino (handle @dbongino) was at one point slapped with a “Search Blacklist”, Weiss showed using another screenshot.

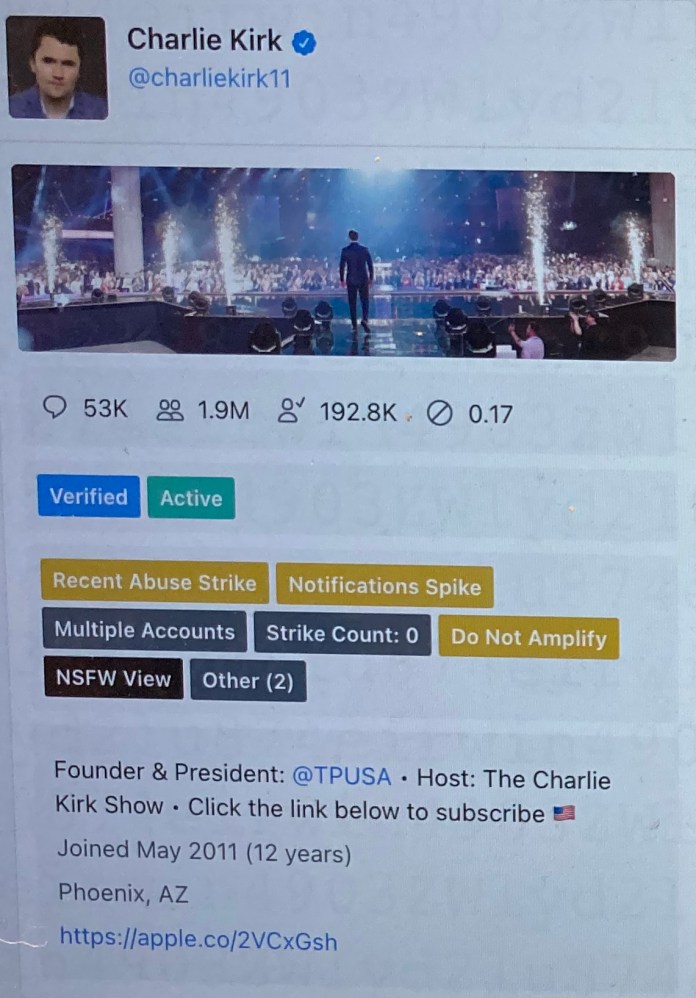

“Shadow-banning”, a term more common among Facebook users for partial censorship in which the platform’s artificial intelligence software keeps a person’s posts from reaching a wide audience, was in vogue too. Twitter set the account of conservative activist Charlie Kirk (@charliekirk11) to “Do Not Amplify”, Weiss revealed.

Twitter had denied that it did such things. Weiss quoted Twitter’s Vijaya Gadde (then head of legal policy and trust) and Kayvon Beykpour (head of product) as saying in 2018: “We do not shadow ban.” They added: “And we certainly don’t shadow ban based on political viewpoints or ideology.”

What many people call “shadow banning,” Twitter executives and employees call “Visibility Filtering” or “VF.” Multiple high-level sources confirmed its meaning, the journalist disclosed.

“Think about visibility filtering as being a way for us to suppress what people see to different levels. It’s a very powerful tool,” one senior Twitter employee told Weiss and team.

“VF” refers to Twitter’s control over user visibility. It used VF to block searches of individual users; to limit the scope of a particular tweet’s discoverability; and to block select users’ posts from ever appearing on the “trending” page or from inclusion in hashtag searches, Weiss explained. “All without users’ knowledge,” she asserted.

“We control visibility quite a bit. And we control the amplification of your content quite a bit. And normal people do not know how much we do,” a Twitter engineer reportedly told the team led by Weiss. “Two additional Twitter employees confirmed” this was what Twitter was doing, Weiss claimed.

The group that decided whether to limit the reach of certain users was the Strategic Response Team - Global Escalation Team, or SRT-GET. It often handled up to 200 “cases” a day, Weiss said.

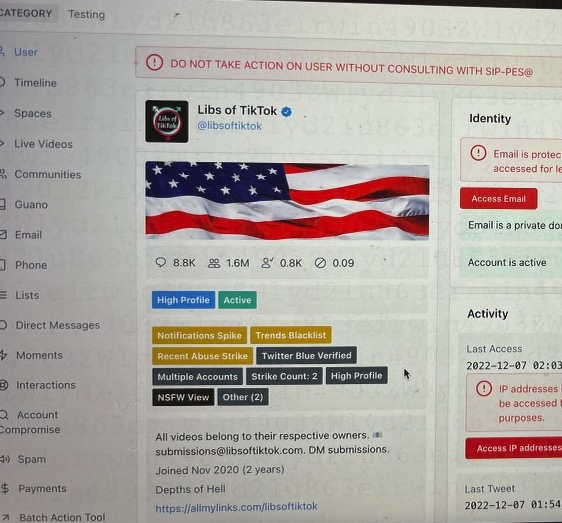

“But there existed a level beyond official ticketing, beyond the rank-and-file moderators following the company’s policy on paper,” Weiss wrote, explaining that this was the “Site Integrity Policy, Policy Escalation Support,” known as “SIP-PES”. This secret group included Head of Legal, Policy, and Trust Vijaya Gadde, the Global Head of Trust & Safety Yoel Roth, successive CEOs Jack Dorsey and Parag Agrawal, and others, the journalist revealed.

“This is where the biggest, most politically sensitive decisions got made,” Weiss tweeted. “Think high follower account, controversial,” another Twitter employee told her team. For these, “there would be no ticket or anything”, the reporter said, relaying what she had heard from some former employees now testifying against their former bosses.

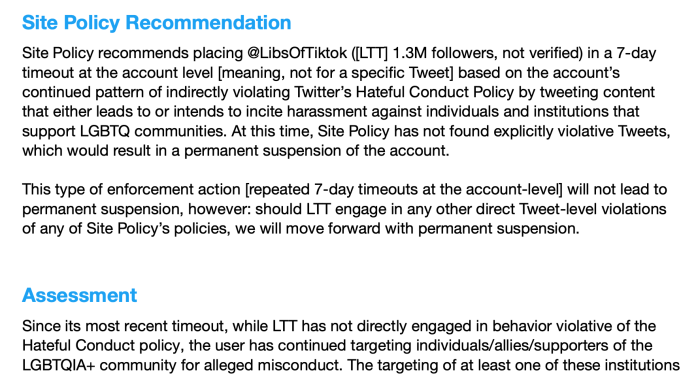

An account that rose to this level of scrutiny was the handle @libsoftiktok, Weiss said. It was on the “Trends Blacklist”, designated as “Do Not Take Action on User Without Consulting With SIP-PES”, she disclosed.

Twitter suspended the account, which Chaya Raichik began in November 2020 and which now boasts over 1.4 million followers, six times this year alone, Raichik told Weiss. Each time, Raichik was blocked from posting for as long as a week, Weiss said, adding that Twitter repeatedly told Raichik that she had been suspended for violating Twitter’s policy against “hateful conduct”. However, that was not true.

Weiss shared a page from an internal SIP-PES memo from October 2022, written after Raichik’s seventh suspension, that records what Twitter was discussing with respect to the account. In it, the committee acknowledged that “LTT has not directly engaged in behavior violative of the Hateful Conduct policy”.

As seen above, the committee justified her suspensions internally by claiming her posts encouraged online harassment of “hospitals and medical providers” by insinuating “that gender-affirming healthcare is equivalent to child abuse or grooming”.

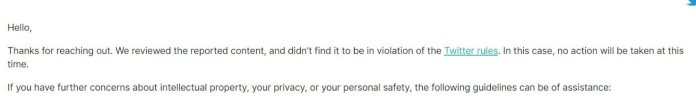

Compare this to what happened when Raichik herself was doxxed on 21 November 2022, Weiss said, adding that a “photo of her home with her address was posted in a tweet that has garnered more than 10,000 likes”.

According to Weiss, when Raichik told Twitter that her address had been disseminated, Twitter Support responded with this message: “We reviewed the reported content, and didn’t find it to be in violation of the Twitter rules.” No action was taken. The doxxing tweet is still up, the journalist said, offering proof.

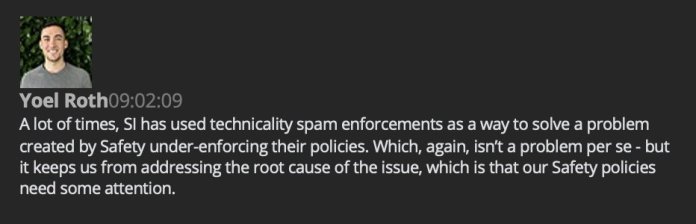

In internal Slack messages, Twitter employees spoke of using technicalities to restrict the visibility of tweets and subjects. Here’s Yoel Roth, Twitter’s then Global Head of Trust & Safety, in a direct message to a colleague in early 2021:

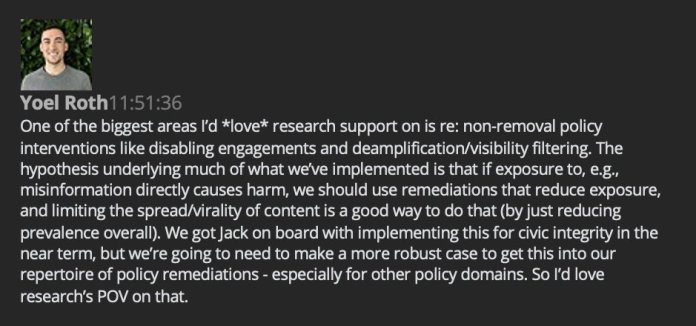

Six days later, in a direct message with an employee on the Health, Misinformation, Privacy, and Identity research team, Roth requested more research to support expanding “non-removal policy interventions like disabling engagements and deamplification/visibility filtering”, Weiss found out.

Weiss quoted Roth as saying, “The hypothesis underlying much of what we’ve implemented is that if exposure to, e.g., misinformation directly causes harm, we should use remediations that reduce exposure, and limiting the spread/virality of content is a good way to do that.”

Roth added, “We got Jack on board with implementing this for civic integrity in the near term, but we’re going to need to make a more robust case to get this into our repertoire of policy remediations – especially for other policy domains,” Weiss wrote on Twitter.

Promising there would be more to the story, Weiss said that the authors at Free Press had broad and expanding access to Twitter’s files. “The only condition we agreed to was that the material would first be published on Twitter,” she said.

“We’re just getting started on our reporting. Documents cannot tell the whole story here. A big thank you to everyone who has spoken to us so far. If you are a current or former Twitter employee, we’d love to hear from you. Please write to: [email protected],” Weiss concluded, saying that the next instalment on the previous Twitter management would be published by Matt Taibbi.
